Trailer: Results from CFHTLS

Apologies for the long radio silence – we’ve been hunkered down analyzing your classifications and writing up the results as a number of research publications. We’re currently in the process of posting two papers from the CFHTLS project, but one (that describes the system) is stuck in the works for being too massive. (Insert gravitational lensing joke here.)

So, we’ll post a complete overview of all our recent progress tomorrow, but for now, here’s a trailer: check out the CFHTLS results paper. New lenses!

New Year, New Data: Hunting for lenses in the infrared with VICS82

Happy New Year everyone! We’re starting 2014 off with a bang, with a brand new dataset, and hopefully a whole new army of spotters who’ll have heard about Space Warps from the BBC Stargazing Live programmes. Welcome to Space Warps, you guys 🙂

So how about this new data then? Here’s an example gravitational lens from the VICS82 infrared survey – and here’s PI Jim Geach of the University of Hertfordshire to explain the survey.

Jim says: VICS82 stands for “VISTA-CFHT Stripe 82”, and is the largest near-infrared imaging survey of its kind, mapping nearly 200 square degrees of the Sloan Digital Sky Survey ‘Stripe 82’ – a narrow strip of sky that is the deepest part of the SDSS. VICS82 is using two 4-m class telescopes fitted with large-format near-infrared cameras: the Canada-France-Hawaii Telescope (atop Mauna Kea in Hawaii) and the VISTA survey telescope in the Chilean Atacama.

Over the last few years, Stripe 82 has received much attention from a wide range of different telescopes, covering the millimetre and radio bands, through the optical and infrared and (soon) high-energy X-rays. Its location along the celestial equator makes the Stripe a great target for facilities in both the northern and southern hemispheres – in fact, it’s shaping up into the first of a new generation of very large and deep extragalactic survey fields. Previously there has been a compromise between survey depth and sky area that has limited the size of the fields we can observe if we want to study the distant Universe (you can go very deep and therefore see very far, but only over very small patches of sky – like the Hubble Deep Field). But with ever-improving sensitivity and mapping capabilities in instrumentation right across the electromagnetic spectrum we’re now able to map much larger areas to much greater depths than ever before. While certainly not as deep as the HDF, VICS82 is the deepest near-infrared survey that exists for the size of the sky it has imaged, and can see normal galaxies out to a redshift of about 1 or so, when the Universe was roughly half its present age. It can see quasars out to much higher redshifts – these objects shine like beacons across the Cosmos.

So, one of the main goals of VICS82 is to survey a huge volume of the Universe to detect a mixture of massive and passive (i.e. not star-forming) galaxies, and also dusty and actively star-forming galaxies and quasars. While VICS82 uses wavebands that complement the existing SDSS imaging (improving photometric redshifts for example), some of the objects detected by VICS82 are expected to be very faint or even invisible in the current optical imaging of the Stripe, showing up only at the longer wavelengths (1-2 microns) probed by VICS82. We call these systems ‘red’, and they are very important to consider in our census of galaxies if we are to properly piece together the story of galaxy evolution.

With SpaceWarps we hope to identify examples of rare ‘red arcs’ which might be very distant, highly reddened galaxies lensed by a foreground mass like a group or cluster. If these galaxies contain lots of dust, their visible light might be completely extinguished internally, and so would not be detectable at shorter wavelengths, but the infrared photons can more easily escape. If we can find even a few of these systems, then the possibilities for detailed follow-up work are tremendous, since – when armed with a model of the lensing mass – we can really dissect the galaxy, exploring its inner workings in a way that is simply impossible without the benefit of lensing.

What we’ve done is select about 40,000 images from the survey, that each contain either a possible lens (ie a massive galaxy or group of galaxies), or a possible quasar source. The images are a little fuzzy, because the night sky is so bright in the infrared – this makes it quite difficult for computers to detect the faint lensed features. Sounds like a job for Space Warps! Good hunting, and thanks for all your contributions – see you on Talk!

Seasons Greetings from Space Warps: Some Refined Lenses, and a Big Thank You!

Well, Space Warps Refine has been running for just over a week, and it’s had a fantastic response from you all. THANK YOU! With over 140,000 classifications of the 3679 images, we have very good data on almost all of them – and some exciting new lens candidates are popping out of the pipeline!

Here’s one: a nice example of a small lensing cluster, with a longish gravitational arc. We think this probably wasn’t picked up by the robotic ArcFinder because it has such low surface brightness, and the field is so crowded.

Here’s another good one: a binary lens? The blue arc looks like it’s composed of three merging images of a small blue background galaxy, strongly lensed by the lower red galaxy. But what’s that yellow object? It’s a little bigger than a star would be, so it’s probably another massive galaxy – and its colour suggests that it’s at a lower redshift than the lens galaxy. If it is in the foreground, then it is lensing both the blue arc, and the red lens! So-called “compound lenses” like these are very interesting: we might be able to learn about the mass of the yellow galaxy as well as the red one. With enough examples of systems like this we might even be able to say something about how fast the Universe is expanding… Tom’s written a paper on this that you might find interesting.

New lenses are not the only things turning up from the Refinement analysis: there are a very small number of false positives sneaking through, but as you might expect, they are pretty convincing imposters! Follow the links in the images’ comments feeds to see the problems with this apparent Einstein Ring, and this nice looking bright arc!

We’ll leave the images on Space Warps Refine up over the holiday period to give you the chance to classify as many of them as you want, and in the New Year we’ll do the final analysis of their probabilities taking all your votes into account. Just as we had hoped for, it looks very much like the outcome will be a short list of very good lens candidates, ranked by probability. An excellent publishable result! When we have the final list, we’ll be taking it to Talk, and starting the process of capturing, with your help, all your investigations of them, including the zoomed in views that show the lens configurations best, and the models that you have been making.

So, it’s been a wonderful first year for Space Warps: a more or less completed first project, and some exciting new lens candidates. Next year, we’ll be back with some new survey data – a new challenge for you.

Thanks very much for all your contributions – we hope you all have a very good holiday season!

Phil, Aprajita and Anupreeta

Coming soon: narrowing down the candidate list with Space Warps Refine

After a huge effort by all the Space Warps volunteers, who have together contributed over 10 million classifications, we have very nearly finished working through the 431,550 images of the CFHT Legacy Survey. A remarkable achievement!

It looks as though the result of this search will be a sample of just over 3300 gravitational lens candidates. Some of them are lenses that we already know about, from various automated searches, while some of them will be new discoveries. However, most will be “false positives” – objects that look like lenses, but actually are not. How do we go about sorting the wheat from the chaff?

The answer is: take a second look! We are setting up the SW website to enable a new round of classifications, one where we ask you to take a really good look at each image, and use all your lens-spotting experience to assess it – and, crucially, only mark it if you really think you see a gravitational lens. We are trying to refine the sample, to leave us with a sample of candidates that have a very high probability of being lenses. We’ll always have the larger, complete sample from the first round; what we want now is a pure sample of lens candidates to present to the rest of the astronomical community.

To help you in this task, we’re making a few changes around the site. The first is that we are replacing the old “dud” training images (the ones where we know there is no lens) with some more difficult images, that contain example false positives that we have identified. The challenge is not to be fooled by them, and only mark the objects you really think are lenses! Likewise, we’re selecting only the hardest sims to include in Space Warps Refine, to keep you on your toes… Secondly, we’re updating the Spotter’s Guide to include some new types of false positive that you’ve pointed out to us over the last few months: red stars are a good example, that we didn’t include in the original guide. We’re also adding to the Spotter’s Guide a gallery of known lenses for you to browse, to see the kinds of features we’re after. Some of the differences in appearance between gravitational lenses and spiral galaxies, mergers, and so on can be quite subtle, so we think the Spotter’s Guide will be even more important in this refinement phase than ever. Likewise, it’s likely that you’ll want to call the Quick Dashboard into action more often than before, as you inspect the candidates. Finally, to make it obvious that the site is set up for the refinement, where more discernment is required, we’re painting it bright orange 🙂

It won’t take long for us all to look through the candidates, even when taking more time to make a considered judgement: but it should be fun, since every single image will contain something worth looking at. And we should have the final results from Space Warps very soon after! We’ll send an email out to the community when the reconfigured website is ready to go and the first classification phase is complete, which should be very soon now. In fact, you can help speed us along by making one last classification push 🙂 Thanks again for all your contributions!

Digging Deeper with the Dashboard

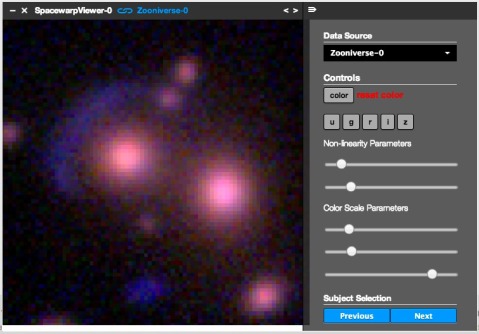

While checking out your lens candidates in Talk, I often found myself wanting to take a closer look at the images – usually to see if I can see a counter-image to the main lensed arc that you’ve flagged. This is not easy, because these counter-images are often fainter, and more central – closer to the lensing object – and this is where the light from the lens galaxy is brightest. In almost all cases though, the lens galaxy (or galaxies) are yellow-red in colour, which means they are bright in the CFHT r, i and z bands, but not so bright in the g-band. Meanwhile, the lensed features are usually blue – so brightest in the g-band. Sometimes it’s useful to be able to look at the different bands’ images individually, while sometimes you just want to be able to change the color contrast and brightness in the composite image. The Space Warps development team have given us a tool for doing exactly this – so in this blog post I thought I’d show you a couple of examples of where I’ve found it useful, and how you can use it yourself.

Here’s a good example: some of the science team have teamed up with a few other spotters to try modeling one of our best candidates, ASW0004dv8 – you might have seen them discussing their progress here. I wondered if we could see a faint counter-image to the big arc on the opposite side of the yellow galaxy causing it – so opened the image in the dashboard, using the button marked “Open In Tools” in the top righthand corner of the object page:

After zooming in (by scrolling) and re-centering (by dragging the image), and then playing around a bit with the “nonlinearity parameters” (which are like brightness and contrast), and the colour scales (to bring out the blues at the expense of the reds), I got the following image:

I reckon I can see a very faint blue counter image, buried in the left-hand bright red lens galaxy, at about 4 o’clock! See what you think – you too can see my dashboard here. Isn’t that cool? You can show someone your dashboard any time, just by posting the link back into Talk, like this:

For me, this is the best thing about this new tool: it allows us first to focus on a particular object in an image, and then show each other what we can see.
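For the curious, here is a rough sketch of what “nonlinearity parameters” and colour scales can do to a single pixel. It assumes an asinh stretch of the kind commonly used for astronomical colour composites; the names `q`, `alpha` and `scales` are hypothetical, and the dashboard’s own implementation may well differ.

```python
import math

def composite_pixel(r, g, b, scales=(1.0, 1.0, 1.0), q=1.0, alpha=0.03):
    """Map three band fluxes to display values in [0, 1].

    scales : per-channel multipliers (raise the last one to bring out the blues)
    q, alpha : hypothetical nonlinearity parameters (roughly contrast and brightness)
    """
    r, g, b = r * scales[0], g * scales[1], b * scales[2]
    total = r + g + b
    if total <= 0.0:
        return (0.0, 0.0, 0.0)
    # asinh is nearly linear for faint pixels and logarithmic for bright ones,
    # so faint arcs stay visible without saturating the lens galaxy.
    factor = math.asinh(alpha * q * total) / (q * total)
    return tuple(min(1.0, max(0.0, c * factor)) for c in (r, g, b))

# A made-up reddish pixel, before and after boosting the blue channel.
default_view = composite_pixel(1.0, 0.5, 0.2)
blue_boosted = composite_pixel(1.0, 0.5, 0.2, scales=(1.0, 1.0, 3.0))
```

Boosting one channel’s scale changes the colour balance of every pixel at once, which is why a faint blue counter-image can pop out of a bright red galaxy.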

Here’s another example: do you think this is an edge-on lens, in image ASW0005q6x? How about now, in just the g-band image?

Have fun, spotters!

What happens to your markers? A look inside the Space Warps Analysis Pipeline

Once you hit the big “Next” button, you’re moving on to a new image of the deep night sky – but what happens to the marker you just placed? And you may have noticed us in Talk commenting that each image is seen by about ten people – so what happens to all of those markers? In this post we take you inside the Space Warps Analysis Pipeline, where your markers get interpreted and translated into image classifications and eventually, lens discoveries.

The marker positions are automatically stored in a database which is then copied and sent to the Science Team every morning for analysis. The first problem we have to face at Space Warps is the same one we run into in life every day – namely, that we are only human, and we make mistakes. Lots of them! If we were all perfect, then the Space Warps analysis would be easy, and the CFHTLS project would be done by now. Instead though, we have to allow for mistakes – mistakes that we make when we’ve done hundreds of images tonight already and we’re tired, or mistakes we make because we didn’t realise what we were supposed to be looking for, or mistakes we make – well, you know how it goes. We all make mistakes! And it means that there’s a lot of uncertainty encoded in the Space Warps database.

What we can do to cope with this uncertainty is simply to allow for it. It’s OK to make mistakes at Space Warps! Other people will see the same images and make up for them. What we do is try and understand what each volunteer is good at: Spotting lenses? Or rejecting images that don’t contain lenses? We do this by using the information that we have about how often each volunteer gets images “right”, so that when a new image comes along, we can estimate the probability that they got it “right” that time. This information has to come from the few images where we do actually know “the right answer” – the training images. Each time you classify a training image, the database records whether you spotted the sim or caught the empty image, and the analysis software uses this information to estimate how likely you are to be right about a new, unseen survey image. But this estimation process also introduces uncertainty, which we also have to cope with!
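To make the idea concrete, here is a minimal sketch of how a volunteer’s two “skill” probabilities could be estimated from their training record. The smoothing with a pseudo-count is an assumption made for this illustration (it keeps a brand new volunteer at 50/50), and the function name and the numbers are hypothetical; the actual analysis software may do this differently.

```python
def estimate_skill(n_correct, n_seen, pseudo_count=1.0):
    """Smoothed fraction of training images classified correctly.

    With no training data this returns 0.5, i.e. "we know nothing yet".
    The pseudo-count smoothing is an illustrative assumption.
    """
    return (n_correct + pseudo_count) / (n_seen + 2.0 * pseudo_count)

# Hypothetical training record for one volunteer.
sims_seen, sims_spotted = 20, 17   # training images that contained a sim
duds_seen, duds_passed = 30, 27    # empty training images left unmarked

p_lens = estimate_skill(sims_spotted, sims_seen)  # P(marks it | lens present)
p_dud = estimate_skill(duds_passed, duds_seen)    # P(leaves it | no lens)

print(f"p_lens = {p_lens:.3f}, p_dud = {p_dud:.3f}")
```

Because each estimate comes from a finite number of training images, it carries its own uncertainty, which is the extra uncertainty mentioned above.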

We wrote the analysis software that we use ourselves, specially for Space Warps. It’s called “SWAP”, and is written in a language called python (which hopefully makes it easy for you to read!) Here’s how it works. Every volunteer, when they do their first classification, is assigned a software “agent” whose job it is to interpret its volunteer’s marker placements, and estimate the probability of the image at hand containing a gravitational lens. These “agents” are very simple-minded: in order to make sense of the markers, we’ve programmed them to make a basic assumption: that they can interpret their volunteer’s classification behavior using just two numbers, the probabilities of being right when a lens is present, and of being right when a lens is not present, which they estimate using your results for the training images. The advantage of working with such simple agents is that SWAP runs quickly (easily in time for the next day’s database dump!), and can be easily checked: it’s robust. The whole collaboration of volunteers and their SWAP agents makes up a giant “supervised learning” system: you guys train on the sims, and the agents then try and learn how likely you are to have spotted, or missed, something. And thanks to some mathematical wizardry from Surhud, we also track how likely the agents are to be wrong about their volunteers.

What we find is that the agents have a reasonably wide spread of probabilities: the Space Warps collaboration is fairly diverse! Even so, *everyone* is contributing. To see this we can plot, for each image, its probability of containing a lens, and follow how this probability changes over time as more and more people classify it. You can see one of these “trajectory” plots above: images start out at the top, assigned a “prior” probability of 1 in 5000 (about how often we expect lenses to occur). As they are classified more and more times they drift down the plot, and either to the left (the low probability side) if no markers are placed on them, or to the right (the high probability side) if they get marked. You can see that we do pretty well at rejecting images for not containing lenses! And you can also see that at each step, no image falls straight down: every time you classify an image, its probability is changed in response.
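Here is a small sketch of how one of these trajectories could be computed, assuming each classification is folded in with Bayes’ theorem using the agent’s two probabilities. The prior of 1 in 5000 is from the post; the function names and the example votes are hypothetical, and this is an illustration of the idea, not SWAP’s actual code.

```python
PRIOR = 1.0 / 5000.0  # prior probability that an image contains a lens

def update(p, marked, p_lens, p_dud):
    """Update the lens probability p after one classification.

    p_lens : agent's probability of marking an image that does contain a lens
    p_dud  : agent's probability of leaving an empty image unmarked
    """
    if marked:
        like_lens, like_dud = p_lens, 1.0 - p_dud
    else:
        like_lens, like_dud = 1.0 - p_lens, p_dud
    numerator = like_lens * p
    return numerator / (numerator + like_dud * (1.0 - p))

def trajectory(classifications, prior=PRIOR):
    """Return the probability after each classification, starting at the prior."""
    p = prior
    path = [p]
    for marked, p_lens, p_dud in classifications:
        p = update(p, marked, p_lens, p_dud)
        path.append(p)
    return path

# Hypothetical votes on one image: (marked?, agent's p_lens, agent's p_dud)
votes = [(True, 0.8, 0.9), (True, 0.7, 0.95), (False, 0.6, 0.7), (True, 0.9, 0.9)]
for step, p in enumerate(trajectory(votes)):
    print(step, f"{p:.5f}")
```

In this sketch a mark from a skilled agent moves the image a long way to the right, while an agent whose two probabilities are both 0.5 would leave it where it was.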

Notice how nearly all of the red “dud” images (where we know there is no lens) end up on the left hand side, along with more than 99% of the survey images. All survey images that end up to the left of the red dashed line get “retired” – withdrawn from the interface and not shown any more. The sims, meanwhile, end up mostly on the right, as they are correctly classified as lenses: at the moment we are only missing about 8% of the sims, and when we look at those images, it does turn out they are the ones containing the sims that are the most difficult to spot. This gives us a lot of confidence that we will end up with a fairly complete sample of real lenses.

Indeed, what do we expect to find based on the classifications you have made so far? We have already been able to retire almost 200,000 images with SWAP, and have identified over 1500 images that you and your agents think contain lenses with over 95% probability. That means that we are almost halfway through, and that we can expect a final sample of a bit more than 3000 lens candidates. Many of these images will turn out not to contain lenses (a substantial fraction will be systems that look very much like lensed systems, but are not actually lenses) – but it’s looking as though we are doing pretty well at filtering out empty images while spotting almost all the visible lenses. Through your classifications, we are achieving both of these goals well ahead of our expectations. Please keep classifying and there will be some exciting times ahead!
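The back-of-the-envelope projection behind those numbers can be sketched as follows. The total of roughly 430,000 images is an assumption taken from the survey size quoted elsewhere on this blog, and the simple linear scaling is an illustration only.

```python
total_images = 430_000      # assumed survey size (not stated in this post)
retired = 200_000           # "almost 200,000" images retired so far
candidates_so_far = 1500    # "over 1500" images above 95% probability

# Fraction of the survey dealt with so far, and a linear extrapolation
# of the candidate count to the full survey.
fraction_done = retired / total_images
projected_candidates = candidates_so_far / fraction_done

print(f"fraction done: {fraction_done:.0%}")
print(f"projected candidates: {projected_candidates:.0f}")
```

Under these assumptions the projection lands a little above 3000, in line with the estimate in the text.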

Sim City

Anupreeta, Surhud and Phil

Simulations! They’re everywhere in Space Warps, sneaking into the images and popping up messages all over the place. They’ve sparked a fair bit of discussion in Talk – lots of people like them, some people find they get in the way, and we hear the same few questions a lot. In this post we have a go at answering them!

Q: Why have we put all these simulated lenses in the survey images?

A: The sims serve two purposes. The first one is training: since many volunteers in the Space Warps community may not have seen gravitational lensing in action before, the sims are there to help you become familiar with what to look for. They give you some first-hand experience in identifying gravitational lenses, and show you the common (and some uncommon) configurations of multiple images that gravitational lenses can form.

The second purpose is driven by the science. We would like to find more new examples of gravitational lens systems that are known to exist in nature, but are difficult to detect. And because they are difficult to detect, we expect to miss some of them. That’s OK (we’re only human!), but we’d at least like to understand which lenses we missed, and why! Some gravitational lenses could be missed for mundane reasons, such as lying close to the border of the image. Other lenses which may have formed arcs could be missed if these arcs have very low brightness, or because the lensed features are hidden in the light of the lensing galaxy, or if these lensed features are too red. Our aim is not to just discover lenses, but to be thorough and quantify what sort of lenses we might miss. How well we find the sims will tell us how many lenses we likely missed, and which ones.

Sims are made using real massive galaxies – which are clustered! Here the sim-making robot has placed two simulated lensed arc systems in the same field as a real gravitational lens…

Q: How did we make the simulated lens systems?

A: As our goal in making sims is to generate a reasonable training sample that is also fairly realistic, we use realistic models both for the background sources and foreground galaxies. These models have a few key properties that are largely sufficient to describe the wide range of lens systems that we have seen so far in the Universe. The mass, distance and shape of the foreground galaxies, and the colors, sizes, brightness and distances of the background galaxies and quasars all play an important role. We use realistic values of these key properties (and their interdependence), as measured by other astronomers in surveys like the CFHTLS. For each sim, we select a massive object from the CFHTLS catalog, and ask, what would this object’s image look like if there was a source behind it being gravitationally lensed? It’s quite difficult to select massive objects (measuring mass is one of the reasons we want to find more lenses!), but we can make an approximation by selecting bright, red-colored objects (which for certain ranges of brightness and color are mostly massive elliptical galaxies). We then select a source, either from the CFHTLS catalog of faint galaxies (which includes estimates of brightness, colour, size and also distance), or from the known distribution of quasar brightnesses and colours.

To mimic the distorting and magnifying effects of gravitational lenses, we create lens models from our understanding of the theory of gravitational lensing combined with observations of known lenses. We know of several hundred gravitational lenses now, and it turns out that in almost all cases, the details of the lensing effect can be described using quite simple models for the lens mass distributions. These lens models are then used to simulate the arcs, doubles and quads you see in the Space Warps images: in each pixel of the simulated image we compute the value of the brightness of the lensed features predicted by the model.
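As an illustration of the pixel-by-pixel calculation, here is a minimal sketch using a singular isothermal sphere (SIS), one of the simplest textbook lens models. The choice of SIS, the Gaussian source and all the parameter values are assumptions made for this example; the models used for the actual sims are not specified here and may be more detailed.

```python
import math

def sis_source_position(x, y, theta_e):
    """Trace an image-plane position back to the source plane for an SIS lens.

    The SIS deflection has constant size theta_e and points away from the lens centre.
    """
    r = math.hypot(x, y)
    if r == 0.0:
        return 0.0, 0.0  # direction undefined at the exact centre; avoided by the grid below
    return x - theta_e * x / r, y - theta_e * y / r

def source_brightness(bx, by, src_x, src_y, src_sigma):
    """Brightness of a round Gaussian source at source-plane position (bx, by)."""
    d2 = (bx - src_x) ** 2 + (by - src_y) ** 2
    return math.exp(-0.5 * d2 / src_sigma ** 2)

def lensed_image(n_pix, pix_scale, theta_e, src_x, src_y, src_sigma):
    """For each pixel, look up the source brightness at the traced-back position."""
    half = n_pix / 2.0
    image = []
    for j in range(n_pix):
        y = (j + 0.5 - half) * pix_scale
        row = []
        for i in range(n_pix):
            x = (i + 0.5 - half) * pix_scale
            bx, by = sis_source_position(x, y, theta_e)
            row.append(source_brightness(bx, by, src_x, src_y, src_sigma))
        image.append(row)
    return image

# Hypothetical setup: Einstein radius 1.0, source offset by 0.3 (same angular units).
image = lensed_image(n_pix=20, pix_scale=0.2, theta_e=1.0,
                     src_x=0.3, src_y=0.0, src_sigma=0.1)
```

With the source slightly off-centre, this sketch produces two images on opposite sides of the lens (a “double”); moving the source closer to the centre stretches them into arcs and eventually a ring.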

The final step is to make sure that the simulated lensed features appear as they would in a real image. The Space Warps images were all taken with the Canada-France-Hawaii Telescope on Mauna Kea, and their resolution is limited mostly due to the atmosphere – we can tell how blurry the images are by looking at stars in the images. It turns out that the CFHTLS images all have roughly the same resolution, so we blur the lensed features by the same amount. We then add noise, and overlay the simulated lensed features on top of the image that contains the massive object we selected, so that the image looks as realistic as possible.
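The blur, noise and overlay steps can be sketched like this, using plain Python lists for a tiny image. The Gaussian blur and Gaussian noise are stand-ins chosen for simplicity, and the function names and numbers are hypothetical; the real pipeline’s blurring and noise model may differ.

```python
import math
import random

def gaussian_kernel(sigma_pix, half_width):
    """Normalised 1-D Gaussian kernel."""
    weights = [math.exp(-0.5 * (k / sigma_pix) ** 2)
               for k in range(-half_width, half_width + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def blur_rows(image, kernel):
    """Convolve every row with the kernel (pixels beyond the edge count as zero)."""
    half = len(kernel) // 2
    n_cols = len(image[0])
    out = []
    for row in image:
        new_row = []
        for i in range(n_cols):
            acc = 0.0
            for k, w in enumerate(kernel):
                ii = i + k - half
                if 0 <= ii < n_cols:
                    acc += w * row[ii]
            new_row.append(acc)
        out.append(new_row)
    return out

def transpose(image):
    return [list(col) for col in zip(*image)]

def blur(image, sigma_pix):
    """Separable 2-D Gaussian blur: rows first, then columns."""
    kernel = gaussian_kernel(sigma_pix, half_width=max(1, int(3 * sigma_pix)))
    return transpose(blur_rows(transpose(blur_rows(image, kernel)), kernel))

def add_sim_to_survey_image(sim, survey, sigma_pix, noise_sigma, rng):
    """Blur the simulated features, add noise, and overlay them on the survey image."""
    blurred = blur(sim, sigma_pix)
    return [[survey[j][i] + blurred[j][i] + rng.gauss(0.0, noise_sigma)
             for i in range(len(sim[0]))]
            for j in range(len(sim))]

# Hypothetical example: a single bright pixel added to a flat background.
sim = [[0.0] * 11 for _ in range(11)]
sim[5][5] = 1.0
survey = [[100.0] * 11 for _ in range(11)]
result = add_sim_to_survey_image(sim, survey, sigma_pix=1.0,
                                 noise_sigma=0.05, rng=random.Random(42))
```

Measuring the blur width from stars in the same image, as described above, is what would set `sigma_pix` in practice.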

Q: Should I mark all the simulated images, even if I have marked/seen them before? Why is this useful?

A: Marking sims is very important – the analysis of the Space Warps classifications depends on it! We’ll blog about this process soon, but the central point is this: when we present a new lens candidate found at Space Warps to the rest of the scientific community, we need to estimate how likely it is to be a real gravitational lens system. This is tricky: the Space Warps classifications come from citizen scientists who have various degrees of experience and skill. We expect some people to be good at spotting faint arcs, others might be good at searching all the way to the edges of the images, while others might be better at efficiently rejecting objects that look like lenses but are not. Our analysis software uses the simulated lens sample to quantify the collaboration’s expertise, and then assesses the likelihood of a lens candidate based on the classifications it has received. The uninteresting and less likely lens candidates are then “retired” from the database every day, so that we don’t have to look at them any more than necessary. Without the sims (and also the dud images, that are known not to contain any lenses), it would be much harder to estimate the likelihood of an image containing a lens, given its classifications. So please keep marking them, even if you’ve seen them before!

Q. Can’t I turn the sims off?

A. We thought about this – but when we were testing the site, we found that if we went for a long period without being shown a simulation, we started missing lenses because we were going too fast! So we decided to keep the sims in, albeit at a low frequency, to keep us on our toes!

Q: Why do some sims look a bit odd?

A: We use simple models to represent the lens and source galaxies; these simple models work fairly well in most cases, but sometimes they fail to capture some of the more unusual objects in the Universe. Since the whole process of generating sims is automated (so that we can make a large enough sample to get good statistics from) and we can only perform visual checks on a small sample, we do expect to have a few systems that may not look quite right.

Here are some of the most common failure modes:

(a) Simulated arcs around nearby spiral galaxies. These are listed as having the incorrect distance in the CFHTLS catalog: they have the right brightness for a massive galaxy, but that’s because they are near, and not actually massive! They are assigned larger distances as the colour of their bulges is very similar to that of massive elliptical galaxies further away. Such spiral galaxies would have a very small Einstein radius – but in the simulation, the arcs are predicted to be too far away from the bulge (i.e. the centre of the spiral) for it to be a plausible lens.

(b) Abnormally thick arcs. This happens when the source is too big and bright to be a plausible background source. Again, this can happen if the source galaxy we drew from the CFHTLS catalog was listed with an inaccurate size.

(c) Wide separation lenses around tiny galaxies. This can happen if the galaxy we are using as a lens is listed in the catalog as being brighter than it is (most likely due to inaccuracies in the inferred distance to this galaxy).

Q: I see two lens systems in a single image (a combination of simulated and/or real lenses), what should I mark?

A: Please mark at least one lensed image for each lens system: we need the simulated lens to be marked so that the analysis software knows you saw it, but then marking any real lenses will register that object as a potential real lens candidate as well. (Also, see the FAQ page on Space Warps).

Q: How can I tell if I am marking a simulated or real image?

A: You shouldn’t be able to, as the simulated images should give you a good indication of what a real lens should look like! You’ll know as soon as you hit “Finished Marking” though, because the Space Warps system always gives feedback straight away.

Q: I see a simulated lens on top of a known real lens. What do I do?

A: We have tried to exclude the real lenses from the simulated lens sample, but unfortunately not all real lenses were successfully excluded (Apologies!). This is not a matter of concern though: we’ll re-inject the images that were used in making the sims into the Space Warps database, but without the simulated lenses. For the time being, please continue to mark any or all of the lensed images that you spot, irrespective of whether you think they are simulated or real.

The discussion of the sims in Talk has been really helpful – thanks for your questions, and for catching the problems mentioned above!

Engage!

Hooray! Space Warps is live, and the spotters are turning up in numbers. Check out the site at spacewarps.org – there’s a few little bugs that Anu, Surhud and the dev team are ironing out, but basically it’s looking pretty good! Thanks very much to everyone who’s helped out in the last few months – your feedback has been very useful indeed in designing a really nice, easy to use website that hopefully will enable many new discoveries. And to all of you who are new to Space Warps – welcome!

If you’re feeling really keen, why don’t you come and hang out in the discussion forum at talk.spacewarps.org? We’re starting to tag images to help organise them, and the more interesting conversations we have there, the more useful it will be for the newer volunteers. And of course, you can vote on the candidates spotted by other people, by making your own collection. Come and take part in the Space Warps collaboration!

PS. Aprajita and I will be making a special guest appearance on the regular Galaxy Zoo Hangout tomorrow – tune in for more slightly distorted spacetime chat!

Space Warps CFHTLS

Our first project is to search the 400,000 images of the Canada France Hawaii Telescope Legacy Survey, or CFHTLS – we’re asking people to spot gravitational lenses in its images, in order to find some new examples, and also to learn how to design automated lens detection systems to use in the future. I caught up with Jean-Paul Kneib, from the Strong Lenses in the Legacy Survey (SL2S) project, and Space Warps co-PI Anupreeta More to ask them to explain a bit more about it.

CFHTLS is a survey conducted with the CFHT 3.6m telescope using the Megacam camera. It targeted 4 patches of the sky, adding up to about 150 sq deg. That’s about 60 times smaller than the SDSS-DR8 imaging area, but it goes typically 2.5 magnitudes (about 10 times) deeper than SDSS, with higher resolution images. The average seeing was 0.6 arcsec, compared to an average of 1.4 arcsec for the SDSS data. So, in short, CFHTLS is a mini SDSS but focussed on the deeper Universe, which means it is a great survey in which to find strong lensing systems!
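The “2.5 magnitudes is about 10 times” conversion follows from the standard astronomical magnitude scale, in which a difference of 5 magnitudes is a factor of 100 in brightness. A quick check, with a hypothetical helper name:

```python
def flux_ratio(delta_mag):
    """Brightness ratio corresponding to a magnitude difference."""
    return 10 ** (0.4 * delta_mag)

depth_gain = flux_ratio(2.5)     # how much fainter CFHTLS can see than SDSS
seeing_ratio = 1.4 / 0.6         # SDSS average seeing divided by CFHTLS average seeing

print(f"depth gain: about {depth_gain:.0f} times fainter")
print(f"seeing: about {seeing_ratio:.1f} times sharper")
```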

The original design was to measure what we call “cosmic shear” – that is, the tiny deformations that large scale structures produce on the appearance of faint galaxies. This cosmic shear measurement is used to put constraints on cosmological parameters. But similarly the strong lensing systems could also tell us something about cosmology … but first we need to find them!

Various cosmological models of the Universe predict different numbers of galaxy clusters at various times, and also differences in how concentrated the mass distribution is within these massive structures. Both these factors affect how efficiently the galaxy clusters will produce highly magnified and distorted arcs. That means that the abundance of arcs in surveys like CFHTLS can be used in turn to understand which cosmological model best describes our Universe.

Lens systems primarily allow us to understand the properties – like the mass – of the lensing galaxies. However, it is possible to derive extra constraints from certain types of lenses in order to learn more about the Universe – for example, its age. We see that quasars change their brightness over time; in a lensed quasar system, the different lensed images appear to vary at different times due to the different paths taken by the light rays to reach us. The time delay seen between these multiple images, combined with the speed of light through the lens, allows a measurement of distance to be made. By measuring these time delays accurately, we can measure distance, compare it with redshift, model the expansion of the Universe, and predict its age.

I mainly looked for arcs in the g-filter, since the arcs look brighter in this filter than in any other. This helped optimize the arc detection. As the CFHTLS imaging goes very deep compared to SDSS, we found a fainter sample of arcs. In order to limit the number of false positive detections, I had to apply some limits on arc properties such as surface brightness, length-to-width ratio, curvature and area. These limits were chosen essentially arbitrarily, after some testing on a smaller sample of known lenses from the CFHTLS. However, it was not known beforehand how this might affect the completeness of the lens sample, or which of the limits on the arc properties could be relaxed or made stricter. There are various factors to which a code is sensitive: for example, a certain arc may satisfy most thresholds but still go undetected because it happened to be located in an image region with high noise levels, or was partially overlapping with a bright galaxy. People are less susceptible to these fluctuations when they look at images, and can cover a wider dynamic range in terms of arc properties. At the same time, they can assess the likelihood that an arc-like image is a lensed image, given its color, shape, curvature, and proximity to and alignment with a nearby lensing galaxy, in a way which is not currently possible with the Arcfinder code.
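To make the idea of threshold cuts concrete, here is a toy Python sketch. It is not the Arcfinder code: the class, the property definitions, the units and every limit value below are invented for illustration. It only shows the general pattern of keeping a candidate when all of its measured properties fall inside the chosen limits.

```python
from dataclasses import dataclass


@dataclass
class ArcCandidate:
    name: str
    surface_brightness: float  # mag per sq arcsec (smaller number = brighter)
    length_to_width: float     # ratio of arc length to arc width
    curvature: float           # hypothetical curvature measure
    area: float                # sq arcsec


# Hypothetical limits, NOT the values used for CFHTLS.
LIMITS = {
    "max_surface_brightness": 26.0,
    "min_length_to_width": 4.0,
    "min_curvature": 0.05,
    "min_area": 1.0,
}


def passes_cuts(arc: ArcCandidate, limits: dict = LIMITS) -> bool:
    """Return True only if the arc satisfies every threshold."""
    return (
        arc.surface_brightness <= limits["max_surface_brightness"]
        and arc.length_to_width >= limits["min_length_to_width"]
        and arc.curvature >= limits["min_curvature"]
        and arc.area >= limits["min_area"]
    )


candidates = [
    ArcCandidate("bright_long_arc", 24.5, 8.0, 0.20, 3.0),
    ArcCandidate("short_blob", 24.0, 1.5, 0.10, 2.0),    # fails length-to-width
    ArcCandidate("faint_arc", 26.8, 7.0, 0.15, 2.5),     # fails surface brightness
]

kept = [arc.name for arc in candidates if passes_cuts(arc)]
print(kept)
```

Note how the faint arc is thrown away even though it looks arc-like in every other respect. That is exactly the kind of object a hard cut can miss and a human spotter might still pick up.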

The results from Space Warps are going to be interesting and exciting in many ways. In terms of improving the Arcfinder, Space Warps will provide a more comprehensive library of lenses – I hope the spotters will find the lenses that Arcfinder missed! By measuring the properties of these new lenses, we will be able to put together a better set of thresholds that would have increased the completeness and purity of the Arcfinder lens sample. It is also possible that some lens properties we haven’t thought of yet will prove more useful for getting higher purity. It would be great to be able to improve the Arcfinder algorithm in this way.
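For readers new to the jargon, "completeness" is the fraction of the real lenses that a search finds, and "purity" is the fraction of the things it flags that turn out to be real lenses. A minimal Python sketch follows, using made-up object names. In practice the "true" list is never perfectly known, which is part of why a bigger library of lenses helps.

```python
def completeness_and_purity(true_lenses: set, flagged: set) -> tuple:
    """Return (completeness, purity) for a set of flagged candidates."""
    if not true_lenses or not flagged:
        raise ValueError("both sets must be non-empty")
    recovered = true_lenses & flagged          # real lenses that were flagged
    completeness = len(recovered) / len(true_lenses)
    purity = len(recovered) / len(flagged)
    return completeness, purity


# Made-up example: four real lenses, three flagged objects, one false alarm.
true_lenses = {"lens_A", "lens_B", "lens_C", "lens_D"}
flagged = {"lens_A", "lens_B", "spiral_galaxy_X"}

completeness, purity = completeness_and_purity(true_lenses, flagged)
print(f"completeness = {completeness:.2f}, purity = {purity:.2f}")
```

Tightening the thresholds usually raises purity at the cost of completeness, and loosening them does the opposite, so the two numbers have to be balanced against each other.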

A New Name, Debugging, and Some Mind Games

Spring is here, or at least coming along, and the Lens Zoo development tiger team is emerging from its incubator. Since just before Christmas we have been hard at work pulling together the many different pieces needed to make a Lens Zoo work well. This week, the Science Team is helping debug the identification interface that the Dev Team built, and then we’ll be ready to beta test it. It’s looking very cool. Following discussions here and elsewhere, we settled on the project name “Space Warps.” As Thomas J pointed out, with this name we won’t ever have to explain who “Len” is!

While all that is happening, we are also starting to think about how the other parts of the project might work. Once our spotters have identified an initial batch of lens candidates, we will have to figure out what to do with them all (and they will be numerous!). A good first check is to phone a friend: with the Talk system, we’ll be able to assemble collections of lens candidates for everyone to comment on. You can see this happening already, freestyle, in Galaxy Zoo Talk. We’ll be trying to come up with ways of making it easier to browse collections in Talk, and to let you cast your vote on whether or not you think each object is a lens.

But hang on: isn’t voting rather subjective? Well, yes and no. A key part of the lens-finding process is modeling, that is, figuring out whether the features we see in the image could actually be due to gravitational lensing. A minimum requirement for a successful lens candidate is that its images be explained by a plausible lens model! Fortunately, some initial lens modeling can be done mentally: this is why much of the site development effort so far has gone into the training material needed to help people understand what gravitational lenses look like, and how the arcs and multiple images are formed. Think about what you are doing when you make a judgement about a lens candidate: you are imagining how that image could have been formed, and to do that, you need a model of a gravitational lens in your head!

For the more difficult and ambiguous cases, though, we’ll need to actually make some predicted images using a computer model, so we’re thinking of other ways that we could enable this. Several members of the Science Team have written lens modeling software; we just need to make it possible for all of you to use it! More on this soon.